Many of these filters are familiar to those who follow AI image editing. They’re the sort of tools that have been turning up in papers and demos for years. But it’s always significant when techniques like these go from bleeding-edge experiments, shared on Twitter among those in the know, to headline features in consumer juggernauts like Photoshop.

As always with these sorts of features, the proof will be in the editing, and the actual utility of neural filters will depend on how Photoshop’s many users react to them. But in a virtual demo The Verge saw, the new tools delivered fast, good-quality results (though we didn’t see the facial expression adjustment tool). These AI-powered edits weren’t flawless, and most professional retouchers would want to step in and make some adjustments of their own afterwards, but they seemed like they would speed up many editing tasks.

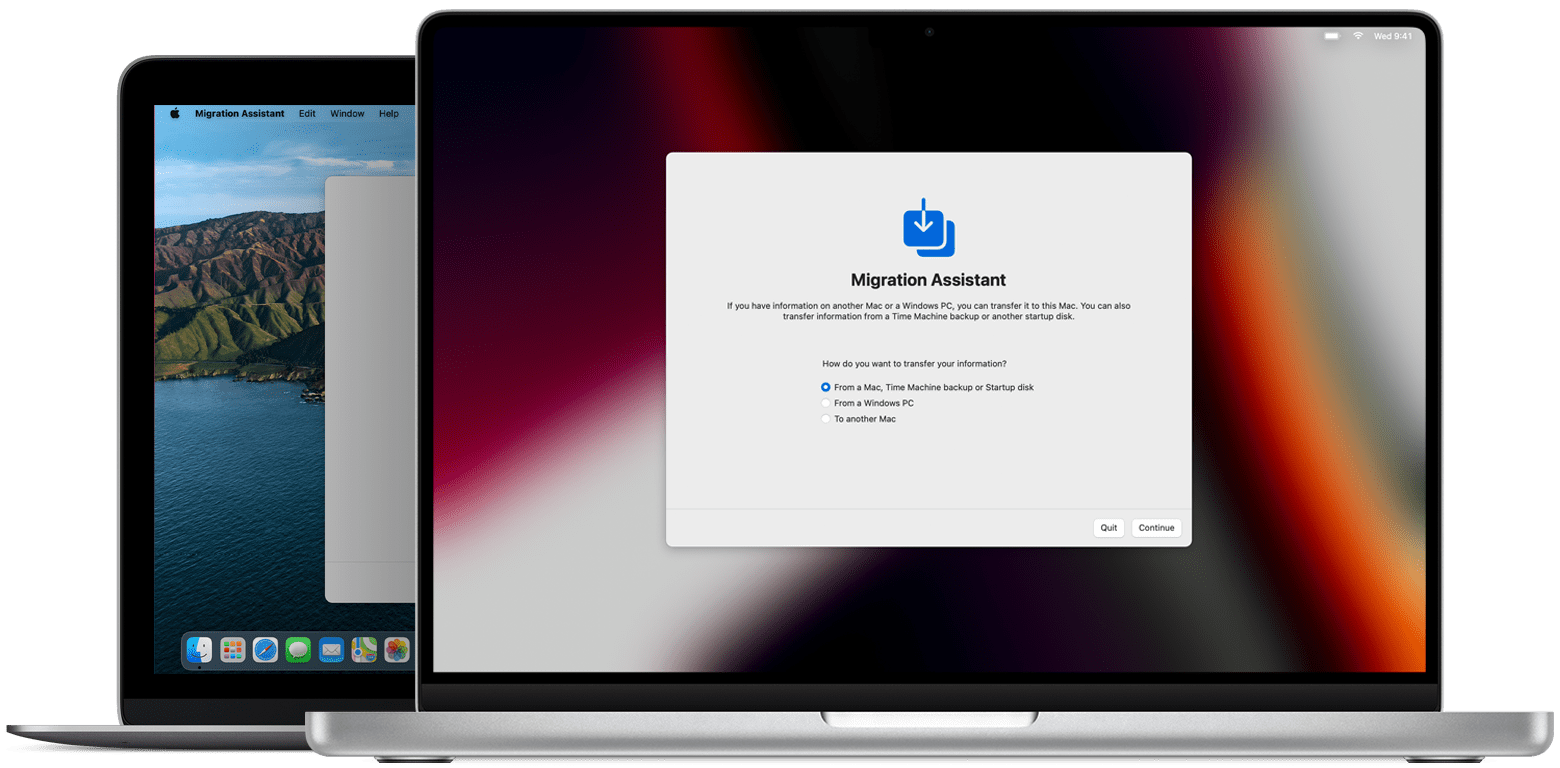

To achieve these effects, Adobe is harnessing the power of generative adversarial networks, or GANs, a type of machine learning technique that’s proved particularly adept at generating visual imagery. Some of the processing is done locally and some in the cloud, depending on the computational demands of each individual tool, but each filter takes just seconds to apply. (The demo we saw was done on an old MacBook Pro and was perfectly fast enough.)
